
<Scrapy spider> Scraping Tencent's experienced-hire (social recruitment) job listings


1. Create the Scrapy project

In a command prompt (DOS window), run:

```shell
scrapy startproject tencent
cd tencent
```

2. Write items.py (it works like a template: the fields to scrape are defined here)

```python
# -*- coding: utf-8 -*-

# Define here the models for your scraped items
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class TencentItem(scrapy.Item):
    # define the fields for your item here like:
    # Position name
    positionname = scrapy.Field()
    # Link to the position's detail page
    positionlink = scrapy.Field()
    # Category
    positionType = scrapy.Field()
    # Number of openings
    positionNum = scrapy.Field()
    # Work location
    positioncation = scrapy.Field()
    # Publish date
    positionTime = scrapy.Field()
```

3. Create the spider file

In the command prompt, run:

```shell
scrapy genspider myspider tencent.com
```

4. Write myspider.py (receives the responses and processes the data)

```python
# -*- coding: utf-8 -*-
import scrapy

from tencent.items import TencentItem


class MyspiderSpider(scrapy.Spider):
    name = 'myspider'
    allowed_domains = ['tencent.com']
    url = 'https://hr.tencent.com/position.php?&start='
    offset = 0
    start_urls = [url + str(offset)]

    def parse(self, response):
        # Job rows alternate between class="even" and class="odd";
        # both predicates need '@' so they test the class attribute
        for each in response.xpath('//tr[@class="even"]|//tr[@class="odd"]'):
            # Initialize the item object
            item = TencentItem()
            # Position name
            item['positionname'] = each.xpath("./td[1]/a/text()").extract()[0]
            # Link (the href on the page is relative)
            item['positionlink'] = 'http://hr.tencent.com/' + each.xpath("./td[1]/a/@href").extract()[0]
            # Category
            item['positionType'] = each.xpath("./td[2]/text()").extract()[0]
            # Number of openings
            item['positionNum'] = each.xpath("./td[3]/text()").extract()[0]
            # Work location
            item['positioncation'] = each.xpath("./td[4]/text()").extract()[0]
            # Publish date
            item['positionTime'] = each.xpath("./td[5]/text()").extract()[0]
            yield item

        # Request the next page (10 rows per page) until offset 2820 is reached.
        # Raising a plain string, as in `raise ("程序结束")`, is itself a TypeError,
        # so the spider simply stops yielding requests after the last page.
        if self.offset < 2820:
            self.offset += 10
            yield scrapy.Request(self.url + str(self.offset), callback=self.parse)
```
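As originally published, the row selector read `//tr[@class="even"]|//tr[class="odd"]`. Without the `@`, the second predicate looks for a child *element* named `class` whose text is `odd` rather than testing the `class` attribute, so it matches no rows at all. The sketch below reproduces the difference with the standard library's `xml.etree.ElementTree`, whose limited XPath supports both predicate forms (it has no `|` union, so each branch is queried separately). The table markup is a made-up, simplified stand-in, not the real listing page.

```python
import xml.etree.ElementTree as ET

# Made-up, simplified stand-in for the job table (not the real page markup)
TABLE = """
<table>
  <tr class="h"><td>Position</td></tr>
  <tr class="even"><td>job A</td></tr>
  <tr class="odd"><td>job B</td></tr>
  <tr class="even"><td>job C</td></tr>
  <tr class="odd"><td>job D</td></tr>
</table>
"""

root = ET.fromstring(TABLE.strip())

# [@class='...'] tests the class attribute: this is what the spider needs
even_rows = root.findall(".//tr[@class='even']")
odd_rows = root.findall(".//tr[@class='odd']")

# [class='...'] (no '@') looks for a child element <class> whose text is 'odd';
# the table has no such element, so nothing matches
buggy_odd_rows = root.findall(".//tr[class='odd']")

print(len(even_rows), len(odd_rows), len(buggy_odd_rows))  # 2 2 0
```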
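The spider walks the listing one page at a time: each `parse` call yields a single request for `offset + 10` until the offset reaches 2820, so the pages are fetched strictly in sequence. One possible alternative, sketched below, is to build every page URL up front and use the list as `start_urls`, which lets Scrapy schedule the pages concurrently. `page_urls` is a hypothetical helper; the base URL, the last offset 2820 and the page size 10 are simply the values hard-coded in the spider and may no longer match the site.

```python
# Values taken from the spider above; the site may have changed since
BASE_URL = "https://hr.tencent.com/position.php?&start="
LAST_OFFSET = 2820
PAGE_SIZE = 10


def page_urls(base=BASE_URL, last=LAST_OFFSET, step=PAGE_SIZE):
    """Hypothetical helper: every listing-page URL from offset 0 to `last` inclusive."""
    return [base + str(offset) for offset in range(0, last + 1, step)]


urls = page_urls()
print(len(urls))  # 283
print(urls[0])    # https://hr.tencent.com/position.php?&start=0
print(urls[-1])   # https://hr.tencent.com/position.php?&start=2820
```

With this approach the spider would set `start_urls = page_urls()` and drop the pagination block at the end of `parse`, so each page is requested only once.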

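5. Write pipelines.py (stores the data)

```python
# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

import json


class TencentPipeline(object):
    def __init__(self):
        self.filename = open('tencent.json', 'wb')

    def process_item(self, item, spider):
        # ensure_ascii=False keeps the Chinese text readable; each object is
        # followed by ',\n', so the file as a whole is not one valid JSON document
        text = json.dumps(dict(item), ensure_ascii=False) + ',\n'
        self.filename.write(text.encode('utf-8'))
        return item

    def close_spider(self, spider):
        # Scrapy calls close_spider(spider), so the method must accept that argument
        self.filename.close()
```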
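Because every object in `tencent.json` is followed by `,\n`, the file has no surrounding brackets and ends with a trailing comma, so a JSON parser cannot load it in one go. A common alternative is JSON Lines: one object per line with no separators, so each line parses on its own. The stdlib-only sketch below illustrates the format; `write_jsonl` is a hypothetical helper, and plain dicts with made-up values stand in for `TencentItem` objects. Scrapy's built-in feed exports can also write this format without a custom pipeline, e.g. `scrapy crawl myspider -o tencent.jl`.

```python
import io
import json


def write_jsonl(items, fp):
    """Hypothetical helper: write one JSON object per line (JSON Lines)."""
    for item in items:
        # ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
        fp.write(json.dumps(dict(item), ensure_ascii=False) + "\n")


# Made-up sample rows standing in for TencentItem objects
rows = [
    {"positionname": "后台开发工程师", "positionNum": "2"},
    {"positionname": "产品经理", "positionNum": "1"},
]

buf = io.StringIO()
write_jsonl(rows, buf)

# Every line is an independent, valid JSON document
parsed = [json.loads(line) for line in buf.getvalue().splitlines()]
print(parsed == rows)  # True
```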

6. Edit settings.py (set the headers, pipelines, etc.)

robots.txt rule:

```python
# Obey robots.txt rules
ROBOTSTXT_OBEY = False
```

Headers:

```python
DEFAULT_REQUEST_HEADERS = {
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36',
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    # 'Accept-Language': 'en',
}
```

Pipelines:

```python
ITEM_PIPELINES = {
    'tencent.pipelines.TencentPipeline': 300,
}
```

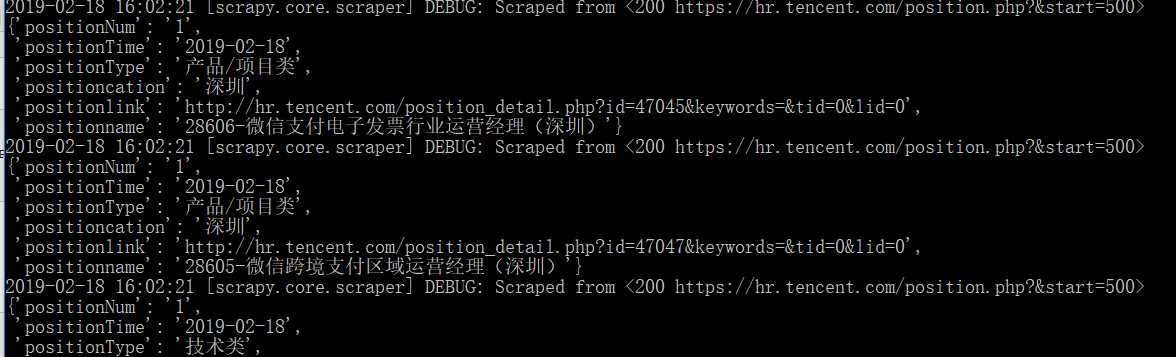
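Setting `ROBOTSTXT_OBEY = False` makes Scrapy skip the robots.txt check entirely. To see what a site's robots.txt would allow before switching the check off, the standard library's `urllib.robotparser` can evaluate the rules. The robots.txt content and the example.com URLs below are made up for illustration and are not Tencent's actual rules; against a live site you would call `set_url(...)` and `read()` instead of `parse(...)`.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt rules for illustration; NOT the file served by hr.tencent.com
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
# parse() takes the file's lines; for a live site use set_url(...) and read() instead
parser.parse(SAMPLE_ROBOTS_TXT.splitlines())

print(parser.can_fetch("*", "https://example.com/position.php"))      # True
print(parser.can_fetch("*", "https://example.com/private/page.php"))  # False
```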

7. Run the spider

In the command prompt, run:

```shell
scrapy crawl myspider
```

Result:

Checking the debug output:

```
2019-02-18 16:02:22 [scrapy.core.scraper] ERROR: Spider error processing <GET https://hr.tencent.com/position.php?&start=520> (referer: https://hr.tencent.com/position.php?&start=510)
Traceback (most recent call last):
  File "E:\software\ANACONDA\lib\site-packages\scrapy\utils\defer.py", line 102, in iter_errback
    yield next(it)
  File "E:\software\ANACONDA\lib\site-packages\scrapy\spidermiddlewares\offsite.py", line 30, in process_spider_output
    for x in result:
  File "E:\software\ANACONDA\lib\site-packages\scrapy\spidermiddlewares\referer.py", line 339, in <genexpr>
    return (_set_referer(r) for r in result or ())
  File "E:\software\ANACONDA\lib\site-packages\scrapy\spidermiddlewares\urllength.py", line 37, in <genexpr>
    return (r for r in result or () if _filter(r))
  File "E:\software\ANACONDA\lib\site-packages\scrapy\spidermiddlewares\depth.py", line 58, in <genexpr>
    return (r for r in result or () if _filter(r))
  File "C:\Users\123\tencent\tencent\spiders\myspider.py", line 22, in parse
    item['positionType'] = each.xpath("./td[2]/text()").extract()[0]
```

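Checking the page in the browser:

This posting is missing one attribute -_-!!! (so many tricks out there!) Its category cell is empty, so `extract()` returns an empty list and indexing it with `[0]` raises an IndexError.

So change this line in myspider.py:

```python
item['positionType'] = each.xpath("./td[2]/text()").extract()[0]
```

Add a check, changing it to:

```python
if len(each.xpath("./td[2]/text()").extract()) > 0:
    item['positionType'] = each.xpath("./td[2]/text()").extract()[0]
else:
    # The category cell is empty for this posting
    item['positionType'] = "None"
```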

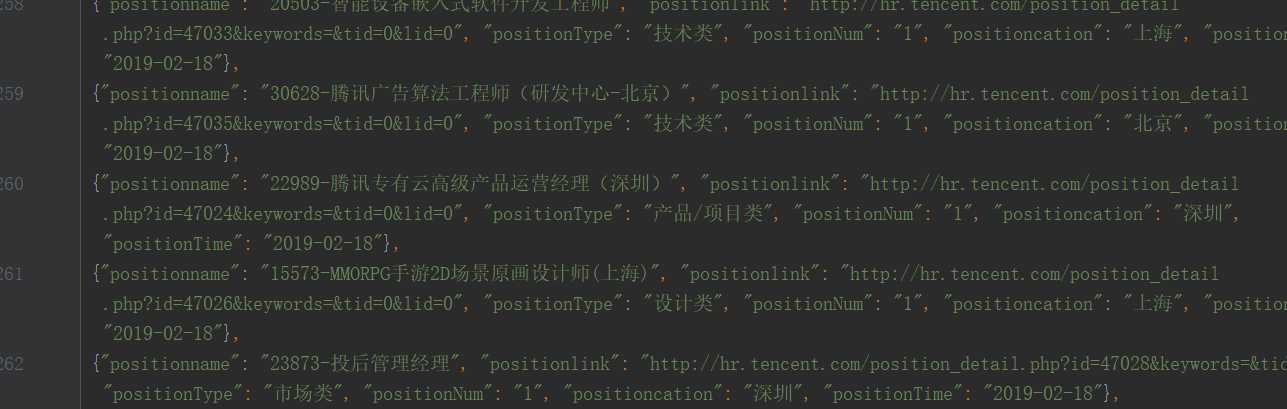

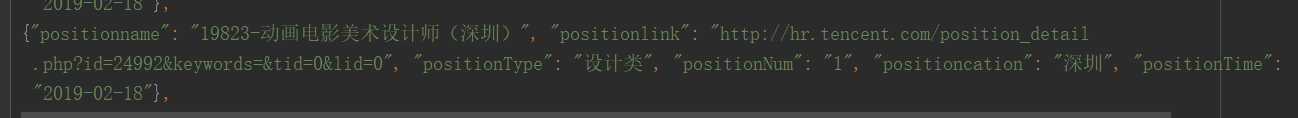
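The check above runs the same XPath query twice. Scrapy selectors also provide `extract_first(default=...)` (and `.get(default=...)` in newer versions), which returns the first match or the given default in a single call, e.g. `each.xpath("./td[2]/text()").extract_first(default="None")`. The stdlib-only sketch below mimics that behaviour on a plain list so the logic can be tried without Scrapy; `first_or_default` is a hypothetical helper, not part of Scrapy, and the sample value is made up.

```python
def first_or_default(values, default="None"):
    """Hypothetical helper mirroring SelectorList.extract_first(default=...):
    return the first extracted string, or `default` when the XPath matched nothing."""
    return values[0] if values else default


# What .extract() returns for a normal row and for a row with an empty category cell
print(first_or_default(["Technology"]))  # Technology
print(first_or_default([]))              # None  (the string "None", as in the fix)
```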

Result:

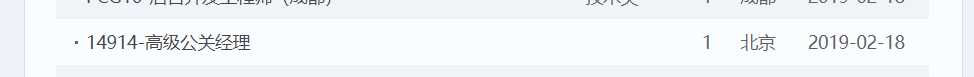

The last page on the website:

The crawl succeeded!


Original article: https://www.cnblogs.com/shuimohei/p/10396406.html
