A Chinese word-segmentation program written with MapReduce failed with Exception from container-launch: org.apache.hadoop.util.Shell$ExitCodeException: (as shown in the figure). After a lot of searching online, I found that this is a problem in Hadoop itself. For details see:
https://issues.apache.org/jira/browse/YARN-1298
https://issues.apache.org/jira/browse/MAPREDUCE-5655
The fix is to recompile Hadoop. For details see: http://zy19982004.iteye.com/blog/2031172

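Spark 1 (the version bundled with CDH by default) does not have this problem. It appears after upgrading to Spark 2 (installed through a CDH parcel): once installed, Spark 2 depends on the old Spark 1 configuration to read the Hadoop cluster's dependency packages. 1. The /etc/spark2/conf directory must point to /hadoop1/cloudera-manager/parcel-repo/SPARK2-2.1.0.cloudera1-1.cdh5.7.0.p0.120904/etc/spark2/conf.dist (command: ln -s /hadoop1/cloudera-manager/parcel-repo/SPARK2-2.1.0.cloudera1-1.cdh5.7.0.p0.120904/etc/spark2/conf.dist /etc/spark2/conf...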

Solution

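After checking the official documentation, I learned that YARN's log monitoring (log aggregation) feature is disabled by default and has to be enabled manually. The steps are as follows: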

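P.S. The configuration files below are in the etc/hadoop folder under the Hadoop root directory; in older Hadoop versions they are in the conf folder under the Hadoop root directory. The Hadoop configuration directory used in this article: /usr/local/src/hadoop-2.6.5/etc/hadoop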

1. Add log aggregation support in yarn-site.xml

<property><name>yarn.log-aggregation-enable</name><value>true</value>

</prop...
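To confirm that the property was actually picked up, the file can be read back and checked. The following is a minimal sketch using only the JDK's XML parser; the class name YarnSiteCheck and the helper propertyValue are hypothetical names introduced for illustration, and the XML string in main is a stand-in for the real yarn-site.xml content.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper, not part of Hadoop: looks up one property
// in a Hadoop-style <configuration> document.
final class YarnSiteCheck {

    /** Returns the trimmed value of the named property, or null if it is absent. */
    static String propertyValue(String xml, String name) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList props = doc.getElementsByTagName("property");
            for (int i = 0; i < props.getLength(); i++) {
                Element prop = (Element) props.item(i);
                NodeList names = prop.getElementsByTagName("name");
                NodeList values = prop.getElementsByTagName("value");
                if (names.getLength() > 0 && values.getLength() > 0
                        && name.equals(names.item(0).getTextContent().trim())) {
                    return values.item(0).getTextContent().trim();
                }
            }
            return null;
        } catch (Exception e) {
            // Malformed XML or parser setup failure: surface it as an unchecked error.
            throw new IllegalStateException("Could not parse configuration XML", e);
        }
    }

    public static void main(String[] args) {
        // Stand-in for the contents of yarn-site.xml.
        String xml = "<configuration><property>"
                + "<name>yarn.log-aggregation-enable</name><value>true</value>"
                + "</property></configuration>";
        System.out.println(propertyValue(xml, "yarn.log-aggregation-enable"));
    }
}
```

In practice the string would be read from the yarn-site.xml file in the configuration directory mentioned above; a null result means the property is missing and YARN falls back to its default.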


When programming on Windows, an error reports that there is no permission to write to Hadoop

Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=Mypc, access=WRITE, inode="/":fan:supergroup:drwxr-xr-x

A pitfall I ran into: no write permission when saving results to HDFS. Change the permissions so that files can be written to the target directory:
$HADOOP_HOME/bin/hdfs dfs -mkdir /output
$HADOOP_HOME/bin/hdfs dfs -chmod 777 /outpu...
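The error message above already contains everything needed to see why the write is refused: the inode "/" is owned by fan, belongs to supergroup, and has mode drwxr-xr-x, while the caller is Mypc. The sketch below mimics the basic owner/group/other rule to illustrate this. It is a simplification written for this note, not HDFS code: the class HdfsPermissionSketch and its method are hypothetical, and ACLs and the HDFS superuser bypass are deliberately ignored.

```java
import java.util.Collections;
import java.util.Set;

// Hypothetical illustration of the POSIX-style check; not the HDFS implementation.
final class HdfsPermissionSketch {

    /**
     * Decides write access from a mode string such as "drwxr-xr-x".
     * Owner bits apply if the user owns the inode, group bits if the user
     * is in the inode's group, and the "other" bits apply otherwise.
     */
    static boolean canWrite(String mode, String owner, String group,
                            String user, Set<String> userGroups) {
        int writeIndex;
        if (user.equals(owner)) {
            writeIndex = 2;          // owner 'w' position
        } else if (userGroups.contains(group)) {
            writeIndex = 5;          // group 'w' position
        } else {
            writeIndex = 8;          // other 'w' position
        }
        return mode.charAt(writeIndex) == 'w';
    }

    public static void main(String[] args) {
        Set<String> noGroups = Collections.emptySet();
        // Values taken from the exception message: user=Mypc, inode="/":fan:supergroup:drwxr-xr-x
        System.out.println(canWrite("drwxr-xr-x", "fan", "supergroup", "Mypc", noGroups));
        // The same check after a chmod 777 on the target directory.
        System.out.println(canWrite("drwxrwxrwx", "fan", "supergroup", "Mypc", noGroups));
    }
}
```

This is why chmod 777 on the output directory makes the error go away: it sets the write bit for "other" users, which is the class Mypc falls into. Granting 777 is convenient on a test cluster but opens the directory to every user.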

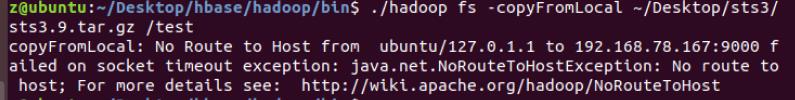

hadoop copyFromLocal fails with: hadoop failed on socket timeout exception: java.net.NoRouteToHostException: No route to host

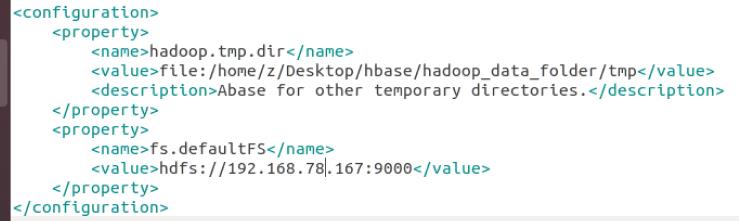

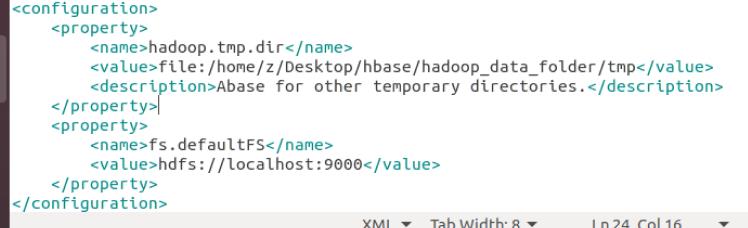

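My core-site.xml configuration is as follows: after changing the IP address to localhost, the problem was resolved. I am not yet very familiar with the configuration and will add more details later.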

00:53:47,977 WARN namenode.NameNode: Encountered exception during format:

java.io.IOException: Cannot remove current directory: /home/hadoop/tmp/dfs/name/current
    at org.apache.hadoop.hdfs.server.common.Storage$StorageDirectory.clearDirectory(Storage.java:433)
    at org.apache.hadoop.hdfs.server.namenode.NNStorage.format(NNStorage.java:579)
    at org.apache.hadoop.hdfs.server.namenode.NNStorage.format(NNSt...

Exception in thread "main" java.net.ConnectException: Call From DESKTOP-SP7EDPV/10.10.7.83 to hadoop102:8020 failed on connection exception: java.net.ConnectException: Connection refused: no further information; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.n...
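A "Connection refused" on hadoop102:8020 means the TCP connection itself failed, so it can be reproduced without any Hadoop client. The sketch below is one way to test that, assuming the client is configured with an fs.defaultFS value of the form hdfs://hadoop102:8020 (an assumption based on the host and port in the trace). The class NameNodeProbe and both methods are hypothetical names for this note.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.net.URI;

// Hypothetical helper, not part of Hadoop: plain TCP reachability test.
final class NameNodeProbe {

    /** Extracts host and port from an fs.defaultFS-style URI such as hdfs://hadoop102:8020. */
    static InetSocketAddress endpointOf(String defaultFs) {
        URI uri = URI.create(defaultFs);
        if (uri.getHost() == null || uri.getPort() == -1) {
            throw new IllegalArgumentException("Expected scheme://host:port but got " + defaultFs);
        }
        // Unresolved on purpose: parsing should not trigger a DNS lookup.
        return InetSocketAddress.createUnresolved(uri.getHost(), uri.getPort());
    }

    /** Returns true if a TCP connection can be opened within the timeout. */
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers connection refused, no route to host, unknown host and timeouts.
            return false;
        }
    }

    public static void main(String[] args) {
        InetSocketAddress endpoint = endpointOf("hdfs://hadoop102:8020");
        boolean ok = canConnect(endpoint.getHostString(), endpoint.getPort(), 3000);
        System.out.println(endpoint.getHostString() + ":" + endpoint.getPort() + " reachable: " + ok);
    }
}
```

If the probe fails from the Windows machine but succeeds on the cluster node itself, the usual suspects are a NameNode bound to a loopback address, a firewall, or a wrong host mapping on the client.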

The Hadoop cluster had started successfully, but this error was still reported when connecting from Eclipse:

Exception while invoking getFileInfo of class ClientNamenodeProtocolTranslatorPB over node2/192.168.190.6:8020 after 5 fail over attempts.
    at org.apache.hadoop.ipc.Client.call(Client.java:1401)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:232)

The cause was that I had previously modified the IP mappings in the Windows hosts file...
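A stale hosts entry can be spotted by asking the JVM what a hostname resolves to on the client machine and comparing it with the address the cluster really uses. This is a minimal sketch with the standard java.net API; the class HostMappingCheck is a hypothetical name, and node2 / 192.168.190.6 in main are simply the values that appear in the error message above.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

// Hypothetical helper for this note: checks the client-side name resolution.
final class HostMappingCheck {

    /** Returns true if any address the hostname resolves to equals expectedIp. */
    static boolean resolvesTo(String hostname, String expectedIp) {
        try {
            for (InetAddress address : InetAddress.getAllByName(hostname)) {
                if (address.getHostAddress().equals(expectedIp)) {
                    return true;
                }
            }
            return false;
        } catch (UnknownHostException e) {
            // No hosts entry and no DNS record for this name.
            return false;
        }
    }

    public static void main(String[] args) {
        // Hostname and IP taken from the error message; adjust to the actual cluster.
        System.out.println("node2 -> 192.168.190.6: " + resolvesTo("node2", "192.168.190.6"));
    }
}
```

A false result on the Windows client points to the hosts file (or DNS) rather than to the cluster.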

Permission denied when saving a file. A pitfall I ran into: no write permission when saving results to HDFS. Change the permissions so that files can be written to the target directory:
$HADOOP_HOME/bin/hdfs dfs -chmod 777 /user
Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=Mypc, access=WRITE, inode="/":fan:supergroup:drwxr-xr-x
package cn.spark.study.sql;
import org.apache.spark.SparkConf;

im...