GBDT: Source Code Analysis of GradientBoostingClassifier


GradientBoostingClassifier

import pandas as pd
import numpy as np
import math
from sklearn.ensemble import GradientBoostingClassifier

# Toy data set: column 0 is the feature, column 1 is the label (-1 or 1).
df = pd.DataFrame([[1, -1], [2, -1], [3, -1], [4, 1], [5, 1],
                   [6, -1], [7, -1], [8, -1], [9, 1], [10, 1]])
X = df.iloc[:, [0]]
Y = df.iloc[:, -1]

# 20 depth-1 trees (stumps), learning rate 1.0 (no shrinkage).
model = GradientBoostingClassifier(n_estimators=20, learning_rate=1.0,
                                   max_depth=1, random_state=0)
model.fit(X, Y)
print(model.predict(X))

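Model initialization

For classification, the round-1 additive model (the initial estimator) no longer predicts the mean. It predicts the log-odds of the positive class: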

def fit(self, X, y, sample_weight=None):
    # pre-cond: pos, neg are encoded as 1, 0
    if sample_weight is None:
        pos = np.sum(y)
        neg = y.shape[0] - pos
    else:
        pos = np.sum(sample_weight * y)
        neg = np.sum(sample_weight * (1 - y))

    if neg == 0 or pos == 0:
        raise ValueError('y contains non binary labels.')
    self.prior = self.scale * np.log(pos / neg)
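As a quick check of the formula above, the following sketch computes the log-odds prior for the ten-sample toy data set by hand. It assumes unit sample weights and a scale factor of 1.0; the variable names are illustrative and are not scikit-learn's.

```python
import math

# Toy labels from the example above, re-encoded from {-1, 1} to {0, 1}.
labels = [-1, -1, -1, 1, 1, -1, -1, -1, 1, 1]
y = [1 if label == 1 else 0 for label in labels]

pos = sum(y)          # number of positive samples
neg = len(y) - pos    # number of negative samples

scale = 1.0           # assumed value of the estimator's scale factor
prior = scale * math.log(pos / neg)

print(pos, neg)       # 4 6
print(prior)          # approximately -0.405
```

Every sample starts from this same score, so the initial model carries no information about the feature at all; the trees added later supply it.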

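Computing the negative gradient (residual) of the loss function under the round-1 additive model

The negative gradient is y - expit(pred), where expit(x) = 1/(1+exp(-x))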

def negative_gradient(self, y, pred, **kargs):
    """Compute the residual (= negative gradient). """
    return y - expit(pred.ravel())
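To make the residual concrete, here is a minimal sketch that evaluates y - expit(pred) for the toy data. The local `expit` helper is a pure-Python stand-in for the library function, and the labels are assumed to be already encoded as 0/1.

```python
import math

def expit(x):
    """Logistic sigmoid, 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

y = [0, 0, 0, 1, 1, 0, 0, 0, 1, 1]   # labels encoded as 0/1
prior = math.log(4 / 6)              # initial score shared by every sample
pred = [prior] * len(y)

# Residual = label minus the currently predicted probability.
residual = [yi - expit(pi) for yi, pi in zip(y, pred)]
print([round(r, 2) for r in residual])
# [-0.4, -0.4, -0.4, 0.6, 0.6, -0.4, -0.4, -0.4, 0.6, 0.6]
```

Because the initial probability is 0.4 for every sample, negatives get a residual of -0.4 and positives get 0.6.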

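Adjusting the leaf nodes

A regression tree is fitted to the negative gradient of the round-1 additive model, but its leaf nodes must then be adjusted: the output value of each leaf depends on the chosen loss function.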

def update_terminal_regions(self, tree, X, y, residual, y_pred,
                            sample_weight, sample_mask,
                            learning_rate=1.0, k=0):
    """Update the terminal regions (=leaves) of the given tree and
    updates the current predictions of the model. Traverses tree
    and invokes template method `_update_terminal_region`.

    Parameters
    ----------
    tree : tree.Tree
        The tree object.
    X : ndarray, shape=(n, m)
        The data array.
    y : ndarray, shape=(n,)
        The target labels.
    residual : ndarray, shape=(n,)
        The residuals (usually the negative gradient).
    y_pred : ndarray, shape=(n,)
        The predictions.
    sample_weight : ndarray, shape=(n,)
        The weight of each sample.
    sample_mask : ndarray, shape=(n,)
        The sample mask to be used.
    learning_rate : float, default=0.1
        learning rate shrinks the contribution of each tree by
        ``learning_rate``.
    k : int, default 0
        The index of the estimator being updated.

    """
    # compute leaf for each sample in ``X``.
    terminal_regions = tree.apply(X)

    # mask all which are not in sample mask.
    masked_terminal_regions = terminal_regions.copy()
    masked_terminal_regions[~sample_mask] = -1

    # update each leaf (= perform line search)
    for leaf in np.where(tree.children_left == TREE_LEAF)[0]:
        self._update_terminal_region(tree, masked_terminal_regions,
                                     leaf, X, y, residual,
                                     y_pred[:, k], sample_weight)

    # update predictions (both in-bag and out-of-bag)
    y_pred[:, k] += (learning_rate
                     * tree.value[:, 0, 0].take(terminal_regions, axis=0))

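For the binary classification loss used here, the value written to each leaf is numerator / denominator, with both sums taken over the samples that fall in that leaf:

numerator = np.sum(sample_weight * residual)
denominator = np.sum(sample_weight * (y - residual) * (1 - y + residual))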
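Since residual = y - p, the term (y - residual) * (1 - y + residual) equals p * (1 - p), so the ratio is a single Newton step for the leaf. The sketch below restates it as a standalone function; `newton_leaf_value` is a hypothetical name, and the zero-denominator guard is an assumption of this sketch rather than something shown in the excerpt.

```python
def newton_leaf_value(y, residual, sample_weight=None):
    """Leaf value sum(w * r) / sum(w * p * (1 - p)), with p = y - r."""
    if sample_weight is None:
        sample_weight = [1.0] * len(y)
    numerator = sum(w * r for w, r in zip(sample_weight, residual))
    denominator = sum(
        w * (yi - r) * (1 - yi + r)
        for w, yi, r in zip(sample_weight, y, residual)
    )
    # Assumed guard for this sketch: avoid dividing by (almost) zero.
    if abs(denominator) < 1e-150:
        return 0.0
    return numerator / denominator

# A leaf holding two negatives and one positive, all with p = 0.4.
value = newton_leaf_value([0, 0, 1], [-0.4, -0.4, 0.6])
print(value)  # (-0.2) / (3 * 0.24), roughly -0.278
```

The denominator is small when the probabilities are already close to 0 or 1, which is why leaf values can be much larger in magnitude than the raw residuals.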

Visualization

[[1,-1],[2,-1],[3,-1],[4,1],[5,1],[6,-1],[7,-1],[8,-1],[9,1],[10,1]]

round1

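The round-1 additive model does not predict the mean:

self.prior = self.scale * np.log(pos / neg)

pos is the number of samples in class 1, and neg is the number of samples in class -1.

np.log(4/6) ≈ -0.4054

To compute the negative gradient, the program automatically re-encodes class -1 as 0. For a sample of class -1:

a = np.log(4/6)
print(0 - 1 / (1 + np.exp(-a)))  # -0.4

return y - expit(pred.ravel())

A sample of class 1 correspondingly gets 1 - 0.4 = 0.6.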

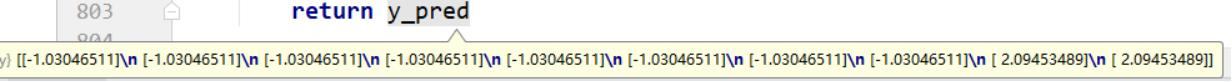

round2

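A regression tree is fitted to the negative gradient under the round-1 additive model, and its leaf nodes then have to be adjusted.

The first 8 samples fall into the left leaf node, and the last 2 samples fall into the right leaf node.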
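The 8/2 partition can be reproduced with a brute-force search over split points. The sketch below assumes the split is chosen by plain squared-error reduction on the residuals; scikit-learn's tree builder is more elaborate, and `best_stump_split` is a hypothetical helper written for this illustration.

```python
def best_stump_split(x, target):
    """Return (threshold, gain) of the split with the largest SSE reduction."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    xs = [x[i] for i in order]
    ts = [target[i] for i in order]
    n = len(xs)
    best_gain, best_threshold = -1.0, None
    for i in range(1, n):
        if xs[i] == xs[i - 1]:
            continue  # cannot split between identical feature values
        left, right = ts[:i], ts[i:]
        mean_l = sum(left) / len(left)
        mean_r = sum(right) / len(right)
        # Reduction in the sum of squared errors produced by this split.
        gain = len(left) * len(right) / n * (mean_l - mean_r) ** 2
        if gain > best_gain:
            best_gain, best_threshold = gain, (xs[i - 1] + xs[i]) / 2
    return best_threshold, best_gain

x = list(range(1, 11))
residual = [-0.4, -0.4, -0.4, 0.6, 0.6, -0.4, -0.4, -0.4, 0.6, 0.6]
threshold, gain = best_stump_split(x, residual)
print(threshold)  # 8.5
```

Splitting between x = 8 and x = 9 isolates two positive residuals on the right, which gives a larger reduction than the competing split between x = 3 and x = 4.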

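Taking the left leaf node as an example, its final output value is computed as follows. The leaf holds six samples with residual -0.4 and two with residual 0.6, so numerator = 6 × (-0.4) + 2 × 0.6 = -1.2 and denominator = 8 × 0.4 × 0.6 = 1.92.

numerator / denominator = -0.625 (the learning rate here is 1)

After adding this to the additive model:

-0.4054 - 0.625 = -1.0304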
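The same arithmetic can be carried through for both leaves and turned back into probabilities. This is a hand calculation under the assumptions already stated (learning rate 1, unit weights); the right-leaf numbers are derived with the same formula and do not appear in the original walkthrough.

```python
import math

def expit(x):
    """Logistic sigmoid, 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

learning_rate = 1.0
prior = math.log(4 / 6)

# Left leaf (samples 1-8): six negatives and two positives, each with p = 0.4.
left_value = (6 * -0.4 + 2 * 0.6) / (8 * 0.4 * 0.6)   # -1.2 / 1.92
# Right leaf (samples 9-10): two positives, each with p = 0.4.
right_value = (2 * 0.6) / (2 * 0.4 * 0.6)             # 1.2 / 0.48

left_score = prior + learning_rate * left_value
right_score = prior + learning_rate * right_value

print(round(left_value, 3), round(right_value, 3))   # -0.625 2.5
print(expit(left_score), expit(right_score))         # updated P(class 1) per leaf
```

After this update the two samples in the right leaf are predicted as class 1 with high probability, while the two positives stuck in the left leaf (x = 4 and x = 5) are still misclassified and will dominate the residuals of the next round.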

round3

round4

…

round20

Prediction

score = self.decision_function(X)  # wraps the computation of the raw scores
decisions = self.loss_._score_to_decision(score)  # map scores to class indices
return self.classes_.take(decisions, axis=0)
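The last two steps amount to thresholding the predicted probability and looking up the original label. The sketch below assumes the binary case, where the decision is the index of the more probable class; `score_to_decision` and `classes` are illustrative stand-ins, not the library's objects.

```python
import math

def score_to_decision(score):
    """Map a raw score to a class index: 1 if P(class 1) > 0.5, else 0."""
    proba_pos = 1.0 / (1.0 + math.exp(-score))
    return 1 if proba_pos > 0.5 else 0

classes = [-1, 1]            # plays the role of classes_
scores = [-1.0304, 2.0945]   # toy-example scores after the first tree
decisions = [score_to_decision(s) for s in scores]
print([classes[d] for d in decisions])  # [-1, 1]
```

Thresholding the probability at 0.5 is the same as checking the sign of the raw score, which is why the original labels -1 and 1 only reappear in the final lookup.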
