Hadoop Java HDFS API Exercises

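1. Generate a text file on the local file system, read it, and write bytes 101-120 of its content into HDFS as a new file.

2. Generate a text file in HDFS, read it, and write bytes 101-120 of its content to the local file system as a new file.

In both drivers, "bytes 101-120" means 20 bytes starting at 0-based offset 101.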
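To make the byte range concrete, here is a small stdlib-only sketch that rebuilds the same test data as `FileBuilder.BuildTestFileContent()` and slices 20 bytes from 0-based offset 101, as both drivers do. No HDFS is involved, and `ByteRangeDemo` with its methods are hypothetical names, not part of the author's code.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Hypothetical helper: reproduces the exercise's test data and byte range locally.
class ByteRangeDemo {
    // Same content as FileBuilder.BuildTestFileContent(): "0123...9899"
    static String buildTestContent() {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < 100; i++) {
            sb.append(i);
        }
        return sb.toString();
    }

    // Returns readLength bytes starting at the 0-based offset beginPosition.
    static String slice(String content, int beginPosition, int readLength) {
        byte[] all = content.getBytes(StandardCharsets.UTF_8);
        if (beginPosition < 0 || readLength < 0 || beginPosition + readLength > all.length) {
            throw new IllegalArgumentException("range outside content");
        }
        byte[] part = Arrays.copyOfRange(all, beginPosition, beginPosition + readLength);
        return new String(part, StandardCharsets.UTF_8);
    }
}
```

The test data is 190 bytes long (ten one-digit numbers plus ninety two-digit numbers), so the 20-byte slice at offset 101 is `55657585960616263646`.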

Environment setup: http://www.cnblogs.com/dopeter/p/4630791.html

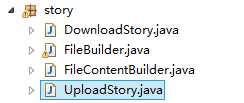

FileBuilder.java

A utility class for generating files: it writes a file to the local file system, writes a file to HDFS, and reads a file at a given HDFS path.

```java
package story;

import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.File;
import java.io.FileNotFoundException;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.PrintWriter;
import java.io.UnsupportedEncodingException;
import java.net.URI;
import java.nio.charset.StandardCharsets;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.util.Progressable;

public class FileBuilder {

    // Build default test data: the numbers 0..99 concatenated ("0123...9899", 190 bytes)
    public static String BuildTestFileContent() {
        StringBuilder contentBuilder = new StringBuilder();
        for (int loop = 0; loop < 100; loop++)
            contentBuilder.append(String.valueOf(loop));
        return contentBuilder.toString();
    }

    // Write content to a local file, encoded as UTF-8
    public static void BuildLocalFile(String buildPath, String content)
            throws FileNotFoundException, UnsupportedEncodingException {
        // try-with-resources flushes and closes the writer even if print() fails
        try (PrintWriter out = new PrintWriter(new File(buildPath), "UTF-8")) {
            out.print(content);
        }
    }

    // Upload a byte array to HDFS as a new file
    public static void BuildHdfsFile(String buildPath, byte[] fileContent) throws IOException {
        // Wrap the bytes in an input stream
        InputStream inputStream = new ByteArrayInputStream(fileContent);

        // Create the file on HDFS; the Progressable callback prints a dot as data is written
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(URI.create(buildPath), conf);
        OutputStream outputStream = fs.create(new Path(buildPath), new Progressable() {
            public void progress() {
                System.out.print(".");
            }
        });

        // Copy the whole stream with a 4096-byte buffer, then close both streams
        IOUtils.copyBytes(inputStream, outputStream, 4096, true);
    }

    // Convenience overload: upload a string as UTF-8 bytes
    public static void BuildHdfsFile(String buildPath, String fileContent) throws IOException {
        BuildHdfsFile(buildPath, fileContent.getBytes(StandardCharsets.UTF_8));
    }

    // Download an HDFS file into memory
    public static byte[] ReadHdfsFile(String readPath) throws IOException {
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(URI.create(readPath), conf);
        InputStream in = null;
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try {
            in = fs.open(new Path(readPath));
            IOUtils.copyBytes(in, out, 4096, false);
        } finally {
            IOUtils.closeStream(in);
        }
        return out.toByteArray();
    }
}
```
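`ReadHdfsFile` relies on Hadoop's `IOUtils.copyBytes(in, out, 4096, false)` to drain the HDFS stream into a `ByteArrayOutputStream`. The sketch below shows the same buffered-copy loop with plain `java.io`, so it runs without a cluster. `StreamCopyDemo` is a hypothetical name, and this is an illustration of the pattern, not Hadoop's actual implementation.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

// Hypothetical stand-in for the copyBytes call in ReadHdfsFile.
class StreamCopyDemo {
    // Copies in to out through a fixed-size buffer until end of stream.
    static long copy(InputStream in, OutputStream out, int bufferSize) throws IOException {
        byte[] buffer = new byte[bufferSize];
        long total = 0;
        int n;
        // read() returns -1 at end of stream, otherwise the number of bytes read
        while ((n = in.read(buffer)) != -1) {
            out.write(buffer, 0, n);
            total += n;
        }
        return total;
    }

    // Drains a stream into memory and closes it, as ReadHdfsFile does.
    static byte[] readAll(InputStream in) throws IOException {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try {
            copy(in, out, 4096);
        } finally {
            in.close();
        }
        return out.toByteArray();
    }
}
```

Because the whole file ends up in memory, this approach suits small files like the 190-byte test data.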

FileContentHandler.java

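A class for processing file content: when reading a local file, it takes a start position and a length to extract; it does the same for the content of a file downloaded from HDFS.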

```java
package story;

import java.io.IOException;
import java.io.RandomAccessFile;
import java.io.UnsupportedEncodingException;

public class FileContentHandler {

    // Read readLength bytes from a local file starting at the 0-based offset beginPosition.
    // Returns an empty array if the range does not fit inside the file.
    public static byte[] GetContentByLocalFile(String filePath, long beginPosition, int readLength) {
        // try-with-resources closes the file on every path, including the out-of-range case
        try (RandomAccessFile accessFile = new RandomAccessFile(filePath, "r")) {
            long length = accessFile.length();
            System.out.println(length);

            if (beginPosition >= 0 && beginPosition + readLength <= length) {
                byte[] readBuffer = new byte[readLength];
                accessFile.seek(beginPosition);
                // readFully keeps reading until the buffer is full; read() may stop early
                accessFile.readFully(readBuffer);
                return readBuffer;
            }
        } catch (IOException e) {
            e.printStackTrace();
        }
        return new byte[0];
    }

    // Copy readLength bytes out of an in-memory buffer and decode them as UTF-8
    public static String GetContentByBuffer(byte[] buffer, int beginPosition, int readLength)
            throws UnsupportedEncodingException {
        byte[] subBuffer = new byte[readLength];
        // Throws IndexOutOfBoundsException if the range exceeds the buffer
        System.arraycopy(buffer, beginPosition, subBuffer, 0, readLength);

        String content = new String(subBuffer, "UTF-8");
        System.out.println(content);
        return content;
    }
}
```
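`GetContentByLocalFile` uses `RandomAccessFile.seek` followed by a read. An alternative, sketched here assuming Java 7+ NIO, is a positional `FileChannel.read(ByteBuffer, long)`, which reads at an explicit offset instead of moving a file pointer. `LocalRangeReader` is a hypothetical name; unlike the class above, it reports an out-of-range request with an `EOFException` rather than returning an empty array.

```java
import java.io.EOFException;
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical alternative to GetContentByLocalFile using a positional read.
class LocalRangeReader {
    static byte[] readRange(Path file, long beginPosition, int readLength) throws IOException {
        try (FileChannel channel = FileChannel.open(file, StandardOpenOption.READ)) {
            if (beginPosition < 0 || beginPosition + readLength > channel.size()) {
                throw new EOFException("requested range exceeds file length " + channel.size());
            }
            ByteBuffer buffer = ByteBuffer.allocate(readLength);
            long position = beginPosition;
            // A single read may return fewer bytes than requested, so loop until full
            while (buffer.hasRemaining()) {
                int n = channel.read(buffer, position);
                if (n < 0) {
                    throw new EOFException("unexpected end of file");
                }
                position += n;
            }
            return buffer.array();
        }
    }
}
```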

UploadStory.java

Driver code for task 1:

```java
package story;

public class UploadStory {

    public static void main(String[] args) throws Exception {
        // These values could also be taken from the command-line arguments.
        String localFilePath = "F:/bulid.txt";
        String hdfsFilePath = "hdfs://hmaster0:9000/user/14699_000/input/build.txt";
        int readBufferSize = 20;
        long fileBeginReadPosition = 101;

        // Build the local file
        FileBuilder.BuildLocalFile(localFilePath, FileBuilder.BuildTestFileContent());
        // Read 20 bytes starting at offset 101
        byte[] uploadBuffer = FileContentHandler.GetContentByLocalFile(
                localFilePath, fileBeginReadPosition, readBufferSize);
        // Upload them to HDFS as a new file (skipped if nothing was read)
        if (uploadBuffer != null && uploadBuffer.length > 0)
            FileBuilder.BuildHdfsFile(hdfsFilePath, uploadBuffer);
    }
}
```
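The comment in `main` notes that the parameters could come from the command line. One possible way to do that, keeping the hard-coded values as defaults, is sketched below. `StoryArgs`, the argument order, and the validation rules are assumptions, not part of the original code.

```java
// Hypothetical parameter holder for the driver programs.
class StoryArgs {
    final String localFilePath;
    final String hdfsFilePath;
    final long beginPosition;
    final int readLength;

    StoryArgs(String localFilePath, String hdfsFilePath, long beginPosition, int readLength) {
        this.localFilePath = localFilePath;
        this.hdfsFilePath = hdfsFilePath;
        this.beginPosition = beginPosition;
        this.readLength = readLength;
    }

    // Assumed order: <localFile> <hdfsFile> <beginPosition> <readLength>;
    // any missing argument falls back to the value hard-coded in UploadStory.
    static StoryArgs parse(String[] args) {
        String local = args.length > 0 ? args[0] : "F:/bulid.txt";
        String hdfs = args.length > 1 ? args[1]
                : "hdfs://hmaster0:9000/user/14699_000/input/build.txt";
        long begin = args.length > 2 ? Long.parseLong(args[2]) : 101L;
        int length = args.length > 3 ? Integer.parseInt(args[3]) : 20;
        if (begin < 0 || length <= 0) {
            throw new IllegalArgumentException("beginPosition must be >= 0 and readLength > 0");
        }
        return new StoryArgs(local, hdfs, begin, length);
    }
}
```

In `UploadStory.main`, `StoryArgs a = StoryArgs.parse(args);` would then replace the four local variables. The same helper would fit `DownloadStory`, though its default HDFS path (`build2.txt`) differs.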

DownloadStory.java

Driver code for task 2:

```java
package story;

public class DownloadStory {

    public static void main(String[] args) throws Exception {
        // These values could also be taken from the command-line arguments.
        String localFilePath = "F:/bulid.txt";
        String hdfsFilePath = "hdfs://hmaster0:9000/user/14699_000/input/build2.txt";
        int readBufferSize = 20;
        int fileBeginReadPosition = 101;

        // Build the file on HDFS
        FileBuilder.BuildHdfsFile(hdfsFilePath, FileBuilder.BuildTestFileContent());

        // Download the whole file into memory
        byte[] readBuffer = FileBuilder.ReadHdfsFile(hdfsFilePath);

        // Extract 20 bytes starting at offset 101
        String content = FileContentHandler.GetContentByBuffer(
                readBuffer, fileBeginReadPosition, readBufferSize);

        // Write them to a local file
        FileBuilder.BuildLocalFile(localFilePath, content);
    }
}
```
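`DownloadStory` downloads the entire HDFS file and then slices it in memory. Only the requested range actually needs to be kept: the `FSDataInputStream` returned by `fs.open` also supports `seek(long)` and `readFully(long position, byte[] buffer)`. To keep the example runnable without a cluster, the sketch below applies the same skip-then-read idea to any `java.io.InputStream`; `StreamRangeReader` is a hypothetical name.

```java
import java.io.EOFException;
import java.io.IOException;
import java.io.InputStream;

// Hypothetical helper: read exactly readLength bytes starting at beginPosition.
class StreamRangeReader {
    static byte[] readRange(InputStream in, long beginPosition, int readLength) throws IOException {
        long toSkip = beginPosition;
        while (toSkip > 0) {
            long skipped = in.skip(toSkip);
            if (skipped <= 0) {
                // skip() may return 0 without being at the end; probe with read()
                if (in.read() == -1) {
                    throw new EOFException("stream ended before offset " + beginPosition);
                }
                skipped = 1;
            }
            toSkip -= skipped;
        }
        byte[] buffer = new byte[readLength];
        int off = 0;
        // read() may return fewer bytes than requested, so loop until the buffer is full
        while (off < readLength) {
            int n = in.read(buffer, off, readLength - off);
            if (n == -1) {
                throw new EOFException("stream ended inside requested range");
            }
            off += n;
        }
        return buffer;
    }
}
```

With this approach only `readLength` bytes are buffered, instead of the whole file as in `ReadHdfsFile`.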

Original article: http://www.cnblogs.com/dopeter/p/4631840.html
