吴裕雄 python 机器学习-DMT(1)

内容导读

互联网集市收集整理的这篇技术教程文章主要介绍了吴裕雄 python 机器学习-DMT(1),小编现在分享给大家,供广大互联网技能从业者学习和参考。文章包含3486字,纯文字阅读大概需要5分钟。

内容图文

import

numpy as np

import

operator as op

from math import log

def createDataSet():

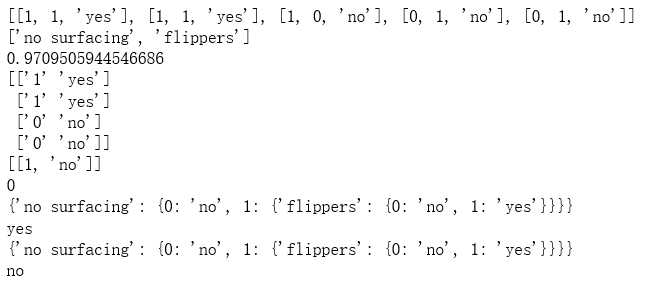

dataSet = [[1, 1, ‘yes‘],

[1, 1, ‘yes‘],

[1, 0, ‘no‘],

[0, 1, ‘no‘],

[0, 1, ‘no‘]]

labels = [‘no surfacing‘,‘flippers‘]

return dataSet, labels

dataSet,labels = createDataSet()

print(dataSet)

print(labels)

def calcShannonEnt(dataSet):

labelCounts = {}

for featVec in dataSet:

currentLabel = featVec[-1]

if(currentLabel notin labelCounts.keys()):

labelCounts[currentLabel] = 0

labelCounts[currentLabel] += 1

shannonEnt = 0.0

rowNum = len(dataSet)

for key in labelCounts:

prob = float(labelCounts[key])/rowNum

shannonEnt -= prob * log(prob,2)

return shannonEnt

shannonEnt = calcShannonEnt(dataSet)

print(shannonEnt)

def splitDataSet(dataSet, axis, value):

retDataSet = []

for featVec in dataSet:

if(featVec[axis] == value):

reducedFeatVec = featVec[:axis]

reducedFeatVec.extend(featVec[axis+1:])

retDataSet.append(reducedFeatVec)

return retDataSet

retDataSet = splitDataSet(dataSet,1,1)

print(np.array(retDataSet))

retDataSet = splitDataSet(dataSet,1,0)

print(retDataSet)

def chooseBestFeatureToSplit(dataSet):

numFeatures = np.shape(dataSet)[1]-1

baseEntropy = calcShannonEnt(dataSet)

bestInfoGain = 0.0

bestFeature = -1

for i in range(numFeatures):

featList = [example[i] for example in dataSet]

uniqueVals = set(featList)

newEntropy = 0.0

for value in uniqueVals:

subDataSet = splitDataSet(dataSet, i, value)

prob = len(subDataSet)/float(len(dataSet))

newEntropy += prob * calcShannonEnt(subDataSet)

infoGain = baseEntropy - newEntropy

if (infoGain > bestInfoGain):

bestInfoGain = infoGain

bestFeature = i

return bestFeature

bestFeature = chooseBestFeatureToSplit(dataSet)

print(bestFeature)

def majorityCnt(classList):

classCount={}

for vote in classList:

if(vote notin classCount.keys()):

classCount[vote] = 0

classCount[vote] += 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0]

def createTree(dataSet,labels):

classList = [example[-1] for example in dataSet]

if(classList.count(classList[0]) == len(classList)):

return classList[0]

if len(dataSet[0]) == 1:

return majorityCnt(classList)

bestFeat = chooseBestFeatureToSplit(dataSet)

bestFeatLabel = labels[bestFeat]

myTree = {bestFeatLabel:{}}

del(labels[bestFeat])

featValues = [example[bestFeat] for example in dataSet]

uniqueVals = set(featValues)

for value in uniqueVals:

subLabels = labels[:]

myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value),subLabels)

return myTree

myTree = createTree(dataSet,labels)

print(myTree)

def classify(inputTree,featLabels,testVec):

for i in inputTree.keys():

firstStr = i

break

secondDict = inputTree[firstStr]

featIndex = featLabels.index(firstStr)

key = testVec[featIndex]

valueOfFeat = secondDict[key]

if isinstance(valueOfFeat, dict):

classLabel = classify(valueOfFeat, featLabels, testVec)

else:

classLabel = valueOfFeat

return classLabel

featLabels = [‘no surfacing‘, ‘flippers‘]

classLabel = classify(myTree,featLabels,[1,1])

print(classLabel)

import pickle

def storeTree(inputTree,filename):

fw = open(filename,‘wb‘)

pickle.dump(inputTree,fw)

fw.close()

def grabTree(filename):

fr = open(filename,‘rb‘)

return pickle.load(fr)

filename = "D:\\mytree.txt"

storeTree(myTree,filename)

mySecTree = grabTree(filename)

print(mySecTree)

featLabels = [‘no surfacing‘, ‘flippers‘]

classLabel = classify(mySecTree,featLabels,[0,0])

print(classLabel)

原文:https://www.cnblogs.com/tszr/p/10148597.html

内容总结

以上是互联网集市为您收集整理的吴裕雄 python 机器学习-DMT(1)全部内容,希望文章能够帮你解决吴裕雄 python 机器学习-DMT(1)所遇到的程序开发问题。 如果觉得互联网集市技术教程内容还不错,欢迎将互联网集市网站推荐给程序员好友。

内容备注

版权声明:本文内容由互联网用户自发贡献,该文观点与技术仅代表作者本人。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如发现本站有涉嫌侵权/违法违规的内容, 请发送邮件至 gblab@vip.qq.com 举报,一经查实,本站将立刻删除。

内容手机端

扫描二维码推送至手机访问。